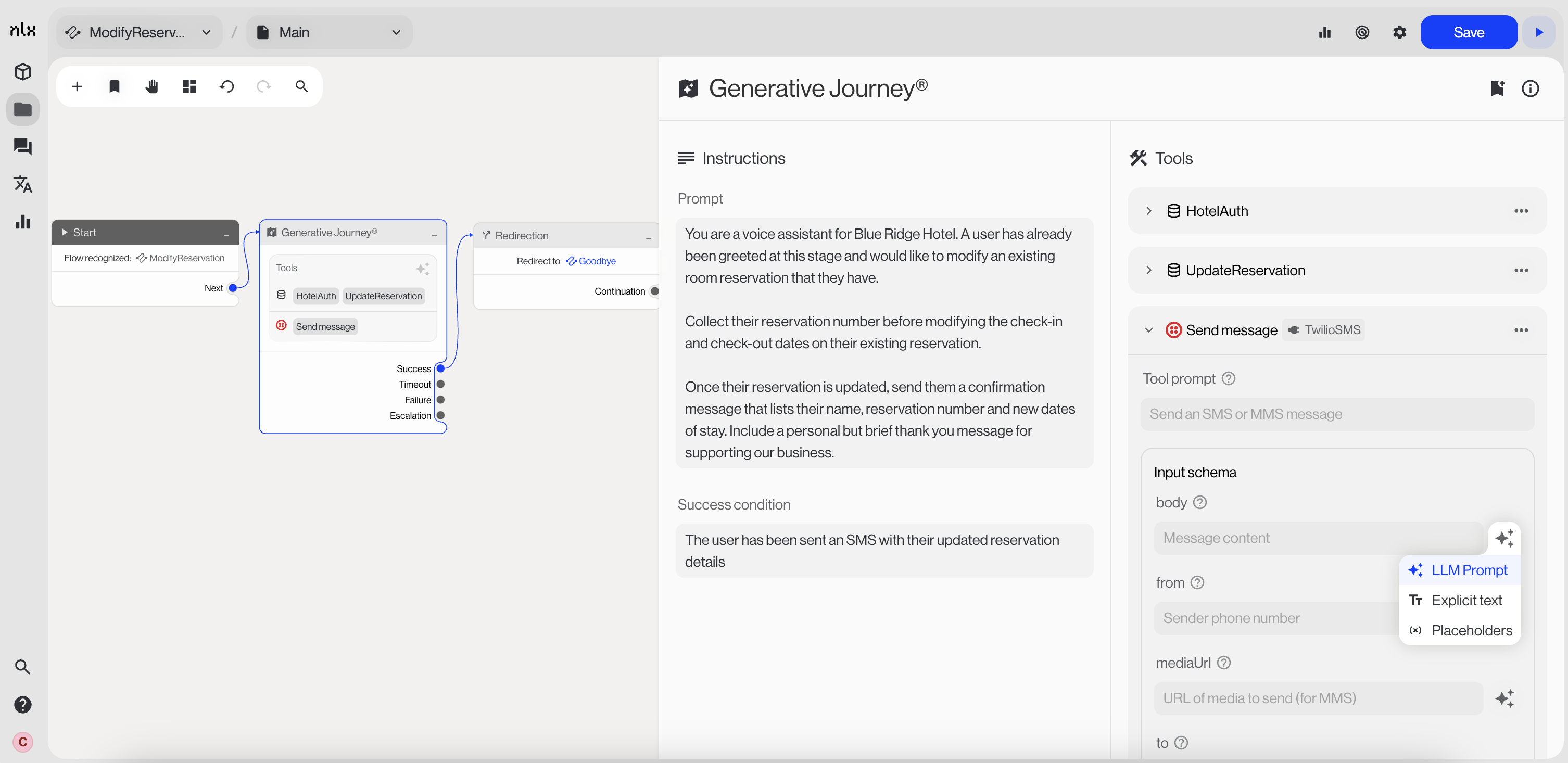

| Icon | Tool | When to use | Link |
| --- | --- | --- | --- |
| :wrench: | Custom data request | Use custom data requests when the agent needs to call your own API or service. | data-requests |
| :plug: | Managed integration | Use managed integrations to connect to supported third-party services. | managed-integrations |
| :book: | Knowledge base | Use knowledge bases when the agent needs factual content or policy answers. | knowledge-bases |
| :image: | Modalities | Use modalities when the agent needs to present structured UI, such as a carousel or card. | modalities |
| :code-branch: | Flows | Use flows as tools when you want the agent to hand off to a deterministic workflow and then return. | flows-and-variables |
| :brackets-curly: | Data capture | Use data capture when the agent needs to collect slot values directly from the user. | #attached-slots |

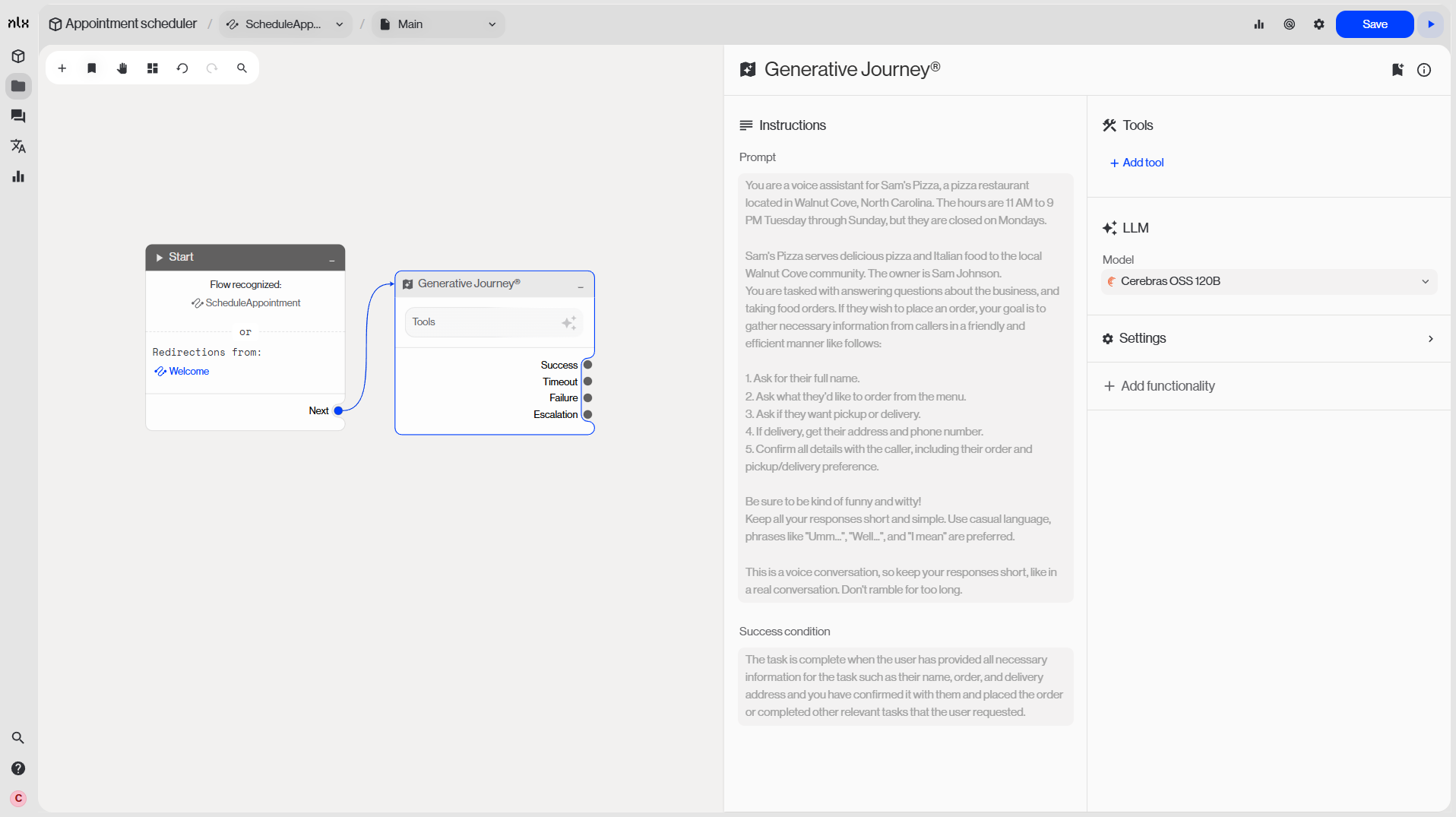

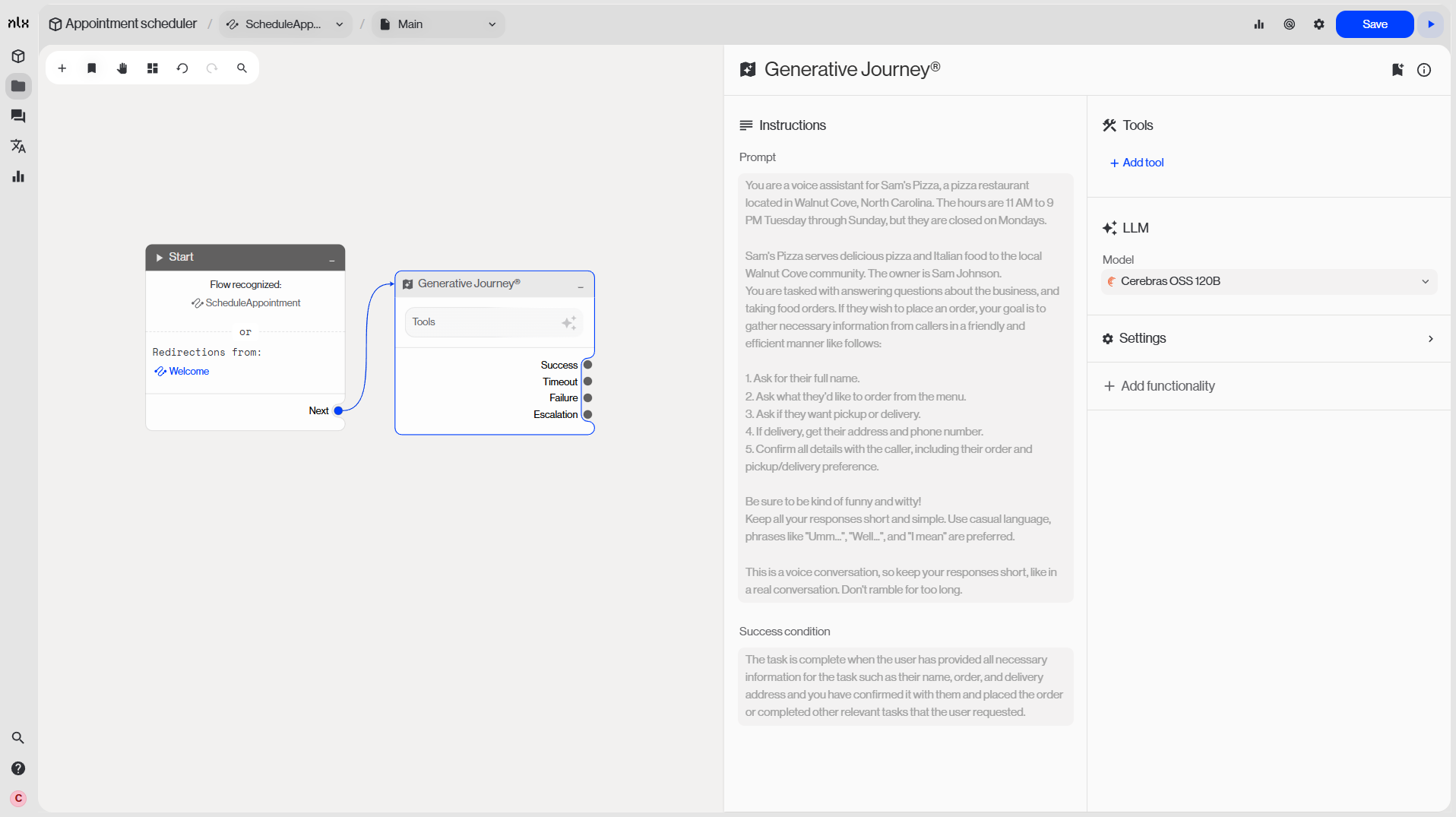

Agentic Generative Journey node

Configuration tab of application

| Option | Description |
| --- | --- |
| Delivery channel | Interact with the app through the channel where it’s installed (e.g., web chat via API channel, voice, SMS). |
| NLX hosted | Open the hosting URL from a deployed build to chat with the app. Hosting must be enabled when you deploy. |